
I'm working on expanding the storage backend for several VMware vSphere 5.5 and 6.0 clusters at my datacenter. I've primarily used NFS datastores throughout my VMware experience (Solaris ZFS, Isilon, VNX, Linux ZFS), and may introduce a Nimble iSCSI array into the environment, as well as a possible Tegile (ZFS) Hybrid array.

The current storage solutions are Nexenta ZFS and Linux ZFS-based arrays, which provide NFS mounts to the vSphere hosts. The networking connectivity is delivered via 2 x 10GbE LACP trunks on the storage heads and 2 x 10GbE on each ESXi host. The switches are dual Arista 7050S-52 top-of-rack units configured as MLAG peers.

On the vSphere side, I'm using vSphere Distributed Switches (vDS) configured with LACP bonds on the 2 x 10GbE uplinks and Network I/O Control (NIOC) apportioning shares for the VM portgroup, NFS, vMotion and management traffic.

This solution and design approach has worked wonderfully for years, but adding iSCSI block storage is a big shift for me. I'll still need to retain the NFS infrastructure for the foreseeable future.

I would like to understand how I can integrate iSCSI into this environment without changing my physical design. The MLAG on the ToR switches is extremely important to me.


Given the above, how can I make the most of an iSCSI solution?


1 Answer

I wouldn't recommend running iSCSI over LACP as there really is no benefit to it over basic link redundancy.
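To illustrate why a LAG adds little here, the following is a minimal sketch of hash-based link selection. It assumes a toy source/destination IP hash built on CRC32; real switch and vDS hash policies differ, and the `pick_uplink` helper and the IP addresses are hypothetical. The point it shows is only that a single initiator-to-target flow always maps to the same member link.

```python
import zlib


def pick_uplink(src_ip: str, dst_ip: str, n_links: int) -> int:
    """Toy stand-in for a src/dst-IP LAG hash (assumption, not a vendor algorithm)."""
    key = f"{src_ip}->{dst_ip}".encode()
    return zlib.crc32(key) % n_links


# One initiator address talking to one target portal (hypothetical addresses).
flow = ("10.0.0.11", "10.0.0.50")

# Every frame of the flow hashes identically, so only one link is ever used.
links_used = {pick_uplink(*flow, n_links=2) for _ in range(1000)}
print(len(links_used))  # 1
```

With one session per host-to-portal pair, the second link in the bond only helps as a failover path, which plain link redundancy already provides.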

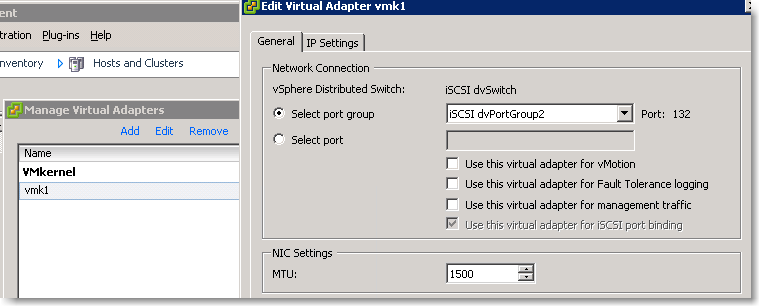

Creating VMkernel ports for iSCSI on your vDS and using them with the software iSCSI HBA is exactly what you should do. This will give you true MPIO. This blog post seems relevant to what you are trying to do, ignoring the part about migrating from standard switches: https://itvlab.wordpress.com/2015/02/14/how-to-migrate-iscsi-storage-from-a-standard-switch-to-a-distributed-switch/
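As a rough sanity check for this layout, the sketch below validates a planned set of iSCSI port groups before binding them to the software adapter. It assumes the commonly documented port-binding rule that each iSCSI VMkernel port group has exactly one active uplink and no standby uplinks; the `IscsiPortGroup` class, the `binding_problems` helper, and the port group and uplink names are all hypothetical and do not come from any VMware API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class IscsiPortGroup:
    """Planned teaming for one iSCSI VMkernel port group (illustrative only)."""
    name: str
    active: List[str]
    standby: List[str] = field(default_factory=list)


def binding_problems(port_groups: List[IscsiPortGroup]) -> List[str]:
    """Return human-readable problems; an empty list means the plan looks sane."""
    problems: List[str] = []
    owner: Dict[str, str] = {}  # uplink -> first port group that uses it
    for pg in port_groups:
        if len(pg.active) != 1:
            problems.append(f"{pg.name}: expected exactly 1 active uplink, found {len(pg.active)}")
        if pg.standby:
            problems.append(f"{pg.name}: standby uplinks should be set to unused")
        for uplink in pg.active:
            if uplink in owner:
                problems.append(f"{pg.name}: shares {uplink} with {owner[uplink]}, so there is no path diversity")
            else:
                owner[uplink] = pg.name
    return problems


plan = [
    IscsiPortGroup("iSCSI-A", active=["uplink1"]),
    IscsiPortGroup("iSCSI-B", active=["uplink2"]),
]
print(binding_problems(plan))  # []
```

Two port groups, each pinned to a different uplink, give the multipathing layer two independent paths to balance across.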

You should not need to add more network adapters if you already have two for iSCSI. I would however recommend that you enable jumbo frames (MTU 9000) on your iSCSI network. This has to be set at all levels of the network such as VMkernel, vDS, physical switches, and SAN appliances.
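Because a single layer left at a smaller MTU silently limits the whole path, a small worked example may help. The sketch below assumes IPv4 with a 20-byte IP header and an 8-byte ICMP header; the layer names and MTU values in `layers` are made up for illustration.

```python
IP_HEADER_BYTES = 20    # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP payload that fits in one unfragmented IPv4 packet."""
    return mtu - IP_HEADER_BYTES - ICMP_HEADER_BYTES


def bottleneck(layers: dict) -> tuple:
    """Return (layer name, MTU) of the most restrictive hop in the path."""
    name = min(layers, key=layers.get)
    return name, layers[name]


# Hypothetical end-to-end path; the array port was left at the default MTU.
layers = {"vmkernel": 9000, "vds": 9000, "physical_switch": 9214, "array_port": 1500}

print(max_ping_payload(9000))  # 8972
print(bottleneck(layers))      # ('array_port', 1500)
```

On an ESXi host, a don't-fragment ping with that payload size (for example `vmkping -d -s 8972` against the array's iSCSI address) is a common way to confirm that jumbo frames pass end to end.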

Tim

The vSphere Distributed Switch (VDS) is a powerful, but often misunderstood technology that is included with VMware vSAN. This post will review some of my favorite settings on the VDS, and how you can use them to get better control, performance, and visibility into your virtual SAN cluster. While it is most known for the ability to create port groups that exist on all hosts with a simple click, it also has a lot of lesser known but incredibly powerful functions that will aid the vSAN administrator.

LLDP/CDP

There’s a lot to like in the VDS when deploying a new vSAN cluster or trying to identify any issues. My favorite underused setting is the ability to configure Link Layer Discovery Protocol (LLDP) and Cisco Discovery Protocol (CDP) for both send and receive. The standard switch is limited to only receiving CDP. This means that not only can a host pull information from switches and identify which switch, port, and VLAN it is plugged into, but the network administrators can also identify which hosts are plugged into which physical switches. Having watched hours of time wasted on “fixing” the wrong port, I find this small setting makes sure that everyone is on the same page about where things have been plugged in. It also helps find documentation errors or incorrectly cabled hosts quickly and easily.

NetFlow and IPFIX allow the inspection of IP traffic information by sending it to a remote collector, such as VMware vRealize Network Insight or third-party tools from SolarWinds, NetFlow Logic, and CA. These tools give you an out-of-band view into the traffic entering and leaving a virtual machine for monitoring, management, and security purposes. This helps you understand not only how much traffic there is, but where it is going.

vSphere Network I/O Control (NIOC) can be used to set quality of service (QoS) for Virtual SAN traffic over the same NIC uplink in a VDS shared by other vSphere traffic types including iSCSI traffic, vMotion traffic, management traffic, vSphere Replication (VR) traffic, NFS traffic, Fault Tolerance (FT) traffic, and virtual machine traffic. General NIOC best practices apply with Virtual SAN traffic in the mix:

Specifically, for Virtual SAN, we make the following recommendations:

LAG – As I discuss in the “using multiple interfaces” blog post, there are more options for the use of multiple interfaces. The VDS supports LAG with a wide variety of advanced hashes, as well as dynamic LACP, which will cleanly fail to a working state if a switch has been misconfigured. While not everyone needs LAG, if you are going to use it, the added functionality of the VDS makes it the better option.

Misconceptions about the VDS

A common concern is how the VDS will behave if vCenter fails. Virtual machines using a VDS will continue to run uninterrupted if vCenter is unavailable. You will lose the ability to reconfigure the switch or add virtual machines to it, but existing virtual machines will continue to operate. If you foresee a situation where you may need to add a virtual machine to a management VLAN/port group during a failure, you can pre-stage an ephemeral port group. This would allow you to rebuild a dependency (such as the vCenter database) that is stored within the environment without having a chicken-and-egg problem or the need to keep a standard switch for management functions.

Another concern is often tied to licensing, as historically the VDS required Enterprise Plus licensing. All editions of vSAN include the VDS, so there is no excuse not to use it with vSAN!

After a long break I am back again on this forum. Over the last couple of months I was busy interacting with various customers as part of the VMworld US and Europe conferences and trying to understand how customers are deploying the distributed switch in their environments. I have also spent a lot of time internally talking to VMware R&D engineers as well as field experts (SEs and PSOs) about the guidance we would like to provide to our customers regarding the deployment of the vSphere Distributed Switch (VDS). After all these discussions, I have come up with some guidelines and best practices that customers should consider while deploying VDS. They are discussed with reference to a sample deployment, and it is important to note that the suggestions are based on the assumptions of that example. Every customer's environment and needs are different, and customers have to take that into account while designing the virtual network infrastructure using VDS. VDS is a flexible platform that can support different requirements from different customers.

So let’s first dive into the different design considerations that you should consider before you go and start deploying VDS in your environment.

Design Considerations

Three main aspects influence the design of a virtual network infrastructure:

1) Customer’s infrastructure design goals

2) Customer’s infrastructure component configurations

3) Virtual infrastructure traffic requirements

Let’s take a look at each of these aspects in a little more detail.


Infrastructure design Goals

Customers want their network infrastructure to be available 24/7, secure from attacks, and efficient throughout day-to-day operations. In a virtualized environment these requirements become much more demanding as more and more business-critical applications run on a consolidated environment. These requirements translate into design decisions that should incorporate the following best practices for virtual network infrastructure:

Infrastructure component configurations

In every customer environment, the compute and network infrastructure used differ in terms of configuration, capacity, and feature capabilities. These different infrastructure component configurations influence the virtual network infrastructure design decisions. The following are some of the configurations and features that administrators have to look out for.

It is impossible to cover all the different virtual network infrastructure design deployments based on the various combinations of type of servers, network adapters and network switch capability parameters. In this paper, the following four commonly used deployments are described that are based on standard rack server and blade server configurations:

It is assumed that the network switch infrastructure has standard layer 2 switch features (High availability, redundant paths, fast convergence, port security) available to provide reliable, secure and scalable connectivity to the server infrastructure.

Virtual Infrastructure traffic

VMware vSphere virtual network infrastructure carries different traffic types. To manage the virtual infrastructure traffic effectively, vSphere and network administrators have to understand the different traffic types and their characteristics. The following are the key traffic types that flow in the VMware vSphere infrastructure along with their traffic characteristics:

The following Table 1 summarizes the characteristics of each traffic type

Table 1 Traffic Types and characteristics

To understand the different traffic flows in the physical network infrastructure, network administrators use network traffic management tools. These tools help monitor the physical infrastructure traffic but do not provide visibility into virtual infrastructure traffic. With the release of vSphere 5, VDS supports the NetFlow feature, which exports the internal (VM to VM) virtual infrastructure flow information to standard network management tools. Administrators now have the required visibility into virtual infrastructure traffic and can monitor it through a familiar set of network management tools. Customers should make use of the network data collected from these tools during capacity planning or network design exercises.

Example Deployment components

After looking at the different design considerations, this section lists the components that are used in an example deployment. This example deployment is used to help illustrate some standard VDS design approaches. The following are some common components in the virtual infrastructure. The list doesn’t include the storage components that are required to build the virtual infrastructure. It is assumed that the customer will deploy IP storage in this example deployment.

Hosts

Four ESXi hosts provide compute, memory and network resources according to the configuration of the hardware. Customers can have a different number of hosts in their environment based on their needs. One VDS can span 350 hosts. This capability to support a large number of hosts provides the scalability required to build a private or public cloud environment using VDS.

Clusters

A cluster is a collection of VMware ESXi hosts and associated virtual machines with shared resources. Customers can have as many clusters in their deployment as required. With one VDS spanning 350 hosts, customers have the flexibility of deploying multiple clusters with a different number of hosts in each cluster. For simplicity, two clusters with two hosts each are considered in this example deployment. One cluster can have a maximum of 32 hosts.

vCenter Server

VMware vCenter Server centrally manages a VMware vSphere environment. Customers can manage VDS through this centralized management tool. vCenter Server can be deployed on a virtual machine or a physical host. The vCenter Server is not shown in the diagrams, but customers should assume that it is present in this example deployment. vCenter Server is only used to provision and manage VDS configuration. Once provisioned, host and virtual machine networking operates independently of vCenter Server. All components required for network switching reside on the ESXi hosts. Even if the vCenter Server fails, the hosts and virtual machines will still be able to communicate.

In a deployment where vCenter Server is hosted on a virtual machine, customers have to pay more attention to the network configuration. If the networking for the virtual machine hosting vCenter Server is misconfigured, the network connectivity of vCenter Server is lost. This misconfiguration must be fixed, yet you need vCenter Server to fix it because only vCenter Server can configure a VDS. As a workaround, customers have to reconnect the virtual machine hosting vCenter Server to a standard vSwitch using a vSphere Client connected directly to the host. To do this, a VSS (vSphere standard switch) must exist on a host that is also connected to the management network of the hosts.

Network Infrastructure

Physical network switches in the access and aggregation layer provide connectivity between ESXi hosts and to the external world. These network infrastructure components support standard layer 2 protocols providing secure and reliable connectivity.

Along with the above four components of the physical infrastructure in this example deployment, some of the virtual infrastructure traffic types are also considered during the design. The following section describes the different traffic types in the example deployment.

Virtual Infrastructure Traffic Types

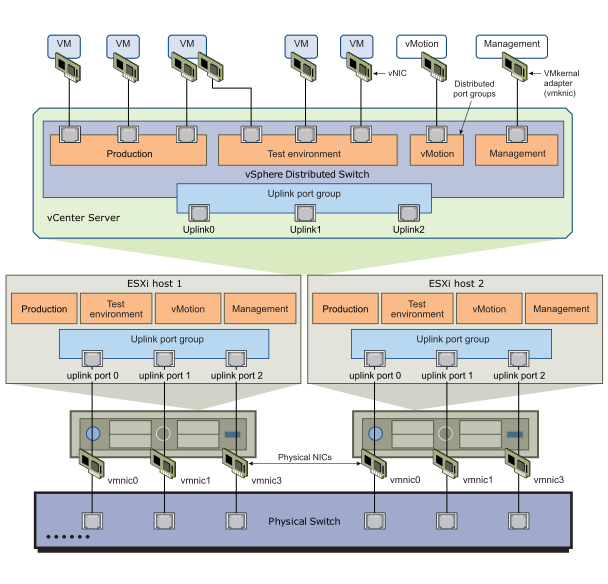

In this example deployment, the standard infrastructure traffic types are iSCSI, vMotion, FT, and management, along with virtual machine traffic. Customers may have other traffic types in their environment based on their choice of storage infrastructure (FC, NFS, FCoE). The diagram in Figure 1 shows the different traffic types along with the associated port groups on an ESXi host. It also shows the mapping of the network adapters to the different port groups.

Figure 1 Different Traffic types running on a Host

After covering the different design considerations and the example deployment, we will turn our attention to the different VDS design options around the example deployment. In the next blog entry I will cover the important virtual and physical switch parameters that customers should consider in all these design options.
